EY refers to the global organization, and may refer to one or more of the member firms of Ernst & Young Global Limited, each of which is a separate legal entity. Ernst & Young Global Limited, a UK company limited by guarantee, does not provide services to clients.

As AI moves from “thinking” to “doing,” businesses must do more than give demonstrations or run pilots. They will gain a competitive advantage if they can deploy, operate and refine physical AI in a real-world environment.

In brief

Physical AI is moving AI from thinking to doing. It is becoming operational infrastructure in the physical economy, and it will transform uptime, safety, quality and resilience in factories, warehouses and operations.

How should companies leverage physical AI as operational infrastructure? Why does this matter now?

Generative AI has accelerated productivity in knowledge work — ideation, analysis, development and customer interactions — but a substantial share of value creation still sits in the physical economy: manufacturing, logistics, construction, maintenance, retail operations, healthcare and infrastructure.

Physical AI is the bridge from digital output to autonomous action in the real world, enabling systems to perceive context, reach decisions within a defined scope and operate safely over time.

In parallel, there is already momentum for automation at a global scale: the implementation and utilization of industrial robots have reached near-record levels and continue to grow, underlining the long-term trajectory towards more automated operations.

There is no longer a debate about increasing the adoption of robots. The question is how to make autonomy practical, scalable and governable in real-world operations.

What is physical AI?

Physical Artificial Intelligence (AI) refers to systems that act autonomously through a cycle of perception, decision and action, embodied in robots, machines, drones and smart-edge devices in the real world.

It senses environments (e.g., cameras, LiDAR, force/tactile sensors), makes decisions based on defined goals and within safety/quality constraints, and uses actuators to move, pick, assemble, inspect and assist.

What has changed? There is a shift from fixed, rule-based automation to systems that can handle variability and exceptions, which are the messy reality of operations.

Why are four forces converging?

Physical AI is accelerating because of improvements to multiple enabling layers:

- Multimodal progress

Models are increasingly able to integrate vision, video and other signals to interpret real-world context, which is the foundation of operational autonomy.

- Simulation and synthetic data

Training and validation can be simulated to reduce real-world trial costs and safety risks. Modern robotics pipelines increasingly rely on high-fidelity simulation and synthetic data generation to cover edge cases and accelerate iteration.

- Edge computing for real-time autonomy

Time-sensitive robots benefit from reduced latency and local processing at the network edge, enabling faster response and more reliable autonomy in dynamic settings.

- Scaling industrial deployment and hardware progress

Industrial deployments continue at a global scale, supporting improvements in components, integration patterns and operational know-how.

However, these technical shifts are meeting management realities (labor constraints, downtime costs, safety requirements and supply-chain volatility) that push leaders towards a “sustainable operational capability” that goes beyond simple labor substitution.

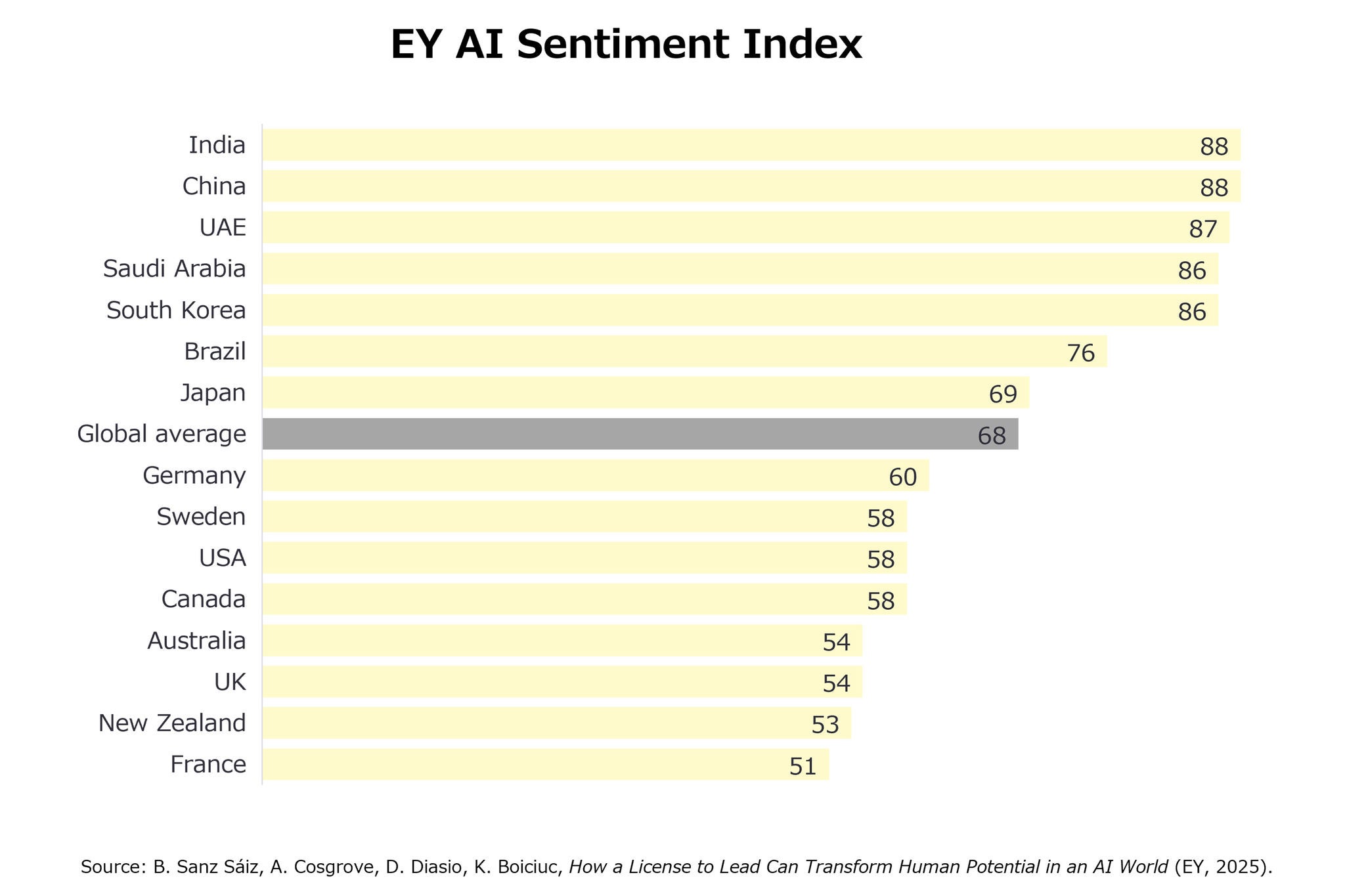

Figure 1: EY AI Sentiment Index. Levels of comfort with AI vary significantly by country

The EY AI Sentiment Index is a composite measure based on four values: expectations of AI, perceived impact on a country, perceived impact on daily life, and comfort with AI. Scores are shown as a 0–100 country average.

While technology is improving at pace, adoption is not uniform across all markets. The EY AI Sentiment Index, which combines expectations of AI, perceived impact on a country and daily life, and comfort with AI, shows wide differences in attitudes to AI. Scores range from the low 50s in some markets (e.g., France at 51, New Zealand at 53, the UK and Australia at 54) to the high 80s in others (e.g., China and India at 88). The global average is 68, with Japan at 69 and the US at 58.

Reframe the opportunity: physical AI as operational infrastructure

Many organizations continue to view physical AI as a robot procurement issue. That framing misses the bigger strategic question. When physical AI is embedded in factories, warehouses and operations, it begins to resemble operating infrastructure with the ability to shape competitive outcomes through uptime, throughput, quality, safety and resilience.

It also changes where value accumulates. The physical AI economy is not only robot hardware. It includes:

- Hardware platforms and key components

- Software/OS, AI models and orchestration

- Systems integration, process redesign and change management

- Operations such as remote monitoring, updates, preventive maintenance and continuous improvement

As adoption increases, the advantage shifts from who is building the machine to who can deploy, operate and improve it in the real world.

A reality check: value is often probability improvement, not perfection

There is still a material gap between demonstrations and scaled operations. In practice, near-term value often comes from raising task completion rates in variable environments: this equates to moving from “mostly automated” scenarios to “almost always handled,” while reducing human involvement to supervision and exception handling.

This is why many successful deployments can be found in controlled environments (e.g., warehouses, factories) where variables can be managed and operations can be standardized.
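The completion-rate framing can be made concrete with a small calculation. The sketch below is a minimal illustration, assuming that tasks succeed or fail independently and that every failed task needs one human intervention; the function name and the task volume are hypothetical and do not come from any deployment data.

```python
def interventions_per_shift(tasks_per_shift: int, completion_rate: float) -> float:
    """Expected number of tasks per shift that need a human to step in.

    Assumes each task is independent and each failure costs one intervention.
    """
    if not 0.0 <= completion_rate <= 1.0:
        raise ValueError("completion_rate must be between 0 and 1")
    return tasks_per_shift * (1.0 - completion_rate)


# Hypothetical volume, for illustration only.
TASKS_PER_SHIFT = 1_000

for rate in (0.90, 0.99, 0.999):
    expected = interventions_per_shift(TASKS_PER_SHIFT, rate)
    print(f"{rate:.1%} handled autonomously: about {expected:.0f} interventions per shift")
```

Under these assumptions, moving from 90% to 99% completion cuts expected interventions from about 100 to about 10 per 1,000 tasks, which is the difference between staffing a line and supervising it.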

Five obstacles to scaling a pilot

If a business wants to scale physical AI, it must be ready to clear five obstacles at the same time. If even one obstacle remains, the business risks a proof-of-concept plateau, where pilots proliferate but production scale does not follow.

1) Capital intensity (Capex + R&D + deployment spend)

Physical AI is more than R&D. It requires sustained investment across models, robotics platforms, site retrofits, training and safety engineering.

2) Key technology maturity (the “head, limbs and body” gap)

Progress is uneven across the stack. We can use the human body as a metaphor:

- Head: multimodal understanding, planning and world models

- Limbs: dexterity, precision control, durability and redundancy

- Body (torso): power, weight, thermals, runtime and charging infrastructure

In many deployments, the limbs and body, which are responsible for reliability, maintenance and runtime, are a greater constraint on scaling than the “head” capability.

“Two things often decide whether physical AI works in practice: firstly, the ‘in-the-field’ know-how to handle sensor data consistently — across devices, preprocessing differences, and inevitable dropouts in real time. Secondly, whether robot foundation models can truly scale — reaching a point where robots can be guided more by instructions than by constant retraining.”

— Kyota Seki, Director, Business Development, AI Products & Solutions, Preferred Networks

3) Viable use cases (business-realistic, ops-sustainable)

The question is no longer “can we automate it?” but “can we run it on a daily basis in a safe and predictable manner?” Near-term traction often comes from B2B use cases with measurable value and repeatable deployment patterns.

4) Cost that can scale (total cost of ownership, not purchase price)

Scaling depends on end-to-end economics across development (data/simulation/validation), manufacturing (components), deployment (integration/training/retrofits) and operations (monitoring/maintenance/model updates).
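One way to reason about these end-to-end economics is a per-robot total cost of ownership estimate. The sketch below is an assumption-laden simplification: it treats development as a one-off cost shared across the fleet and the other three phases as per-robot costs. The class, function and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseCosts:
    development: float          # one-off: data, simulation, validation (shared by the fleet)
    manufacturing: float        # per robot: components and assembly
    deployment: float           # per robot: integration, training, retrofits
    operations_per_year: float  # per robot, per year: monitoring, maintenance, model updates


def tco_per_robot(costs: PhaseCosts, fleet_size: int, years: int) -> float:
    """Total cost of ownership per robot over the given number of years."""
    if fleet_size <= 0 or years <= 0:
        raise ValueError("fleet_size and years must be positive")
    shared_development = costs.development / fleet_size
    return (
        shared_development
        + costs.manufacturing
        + costs.deployment
        + costs.operations_per_year * years
    )


# Hypothetical figures, for illustration only.
costs = PhaseCosts(
    development=1_000_000,
    manufacturing=50_000,
    deployment=20_000,
    operations_per_year=10_000,
)
for fleet in (10, 100):
    print(f"fleet of {fleet}: {tco_per_robot(costs, fleet, years=5):,.0f} per robot over 5 years")
```

With these illustrative inputs the purchase-related share stays fixed while the development share shrinks as the fleet grows, which is why repeatable deployment patterns matter more to scaling than the price of a single unit.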

“Ideally, most of what a robot does should be processed locally, on the edge. The cloud should be reserved for something a single device can’t decide, like fleet-level optimization. However, current model size and battery constraints still make fully edge-native approaches difficult, so the practical answer is to increase edge processing while managing latency and connectivity costs with the right network design.”

— Kyota Seki, Director, Business Development, AI Products & Solutions, Preferred Networks

5) Safety and regulation (the “last mile” of real-world deployment)

As robots operate in ever closer contact with people, society’s requirements are also greater: safety standards, accountability, privacy, auditability and traceability. The winning solution is not regulation avoidance, but building proof mechanisms — verification, logging, monitoring and governance — that make risk manageable at scale.

“Safety has to be designed from the start. Depending on the use case, you need to decide early whether you’re optimizing for fail-safe shutdown or fail-operational continuity. And if AI models are involved in control, guardrails must prevent extreme outputs that could violate safety requirements. You can’t bolt this on at a later date once systems are already in the real world.”

— Kyota Seki, Director, Business Development, AI Products & Solutions, Preferred Networks
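The guardrail idea in the quote above can be sketched as a thin layer between a model and the actuator. This is a minimal illustration under stated assumptions, not a certified safety function: the names, the single velocity command and the speed limit are all hypothetical, and a real system would implement this logic in validated safety hardware and software.

```python
import math
from enum import Enum


class FailureMode(Enum):
    FAIL_SAFE = "fail_safe"                # stop when the output cannot be trusted
    FAIL_OPERATIONAL = "fail_operational"  # continue at a conservative fallback


def guarded_velocity(
    model_output: float,
    max_speed: float,
    mode: FailureMode,
    fallback_speed: float = 0.0,
) -> float:
    """Return a velocity command that never exceeds +/- max_speed."""

    def clamp(value: float) -> float:
        return max(-max_speed, min(max_speed, value))

    if math.isnan(model_output) or math.isinf(model_output):
        # Invalid model output: the policy chosen at design time decides what happens.
        if mode is FailureMode.FAIL_SAFE:
            return 0.0
        return clamp(fallback_speed)

    # Valid but possibly extreme output: clip it to the permitted range.
    return clamp(model_output)


# Hypothetical limit of 1.5 m/s near people.
print(guarded_velocity(5.0, 1.5, FailureMode.FAIL_SAFE))
print(guarded_velocity(float("nan"), 1.5, FailureMode.FAIL_OPERATIONAL, fallback_speed=0.3))
```

The point of the sketch is the ordering: the choice between stopping and continuing is a parameter fixed before deployment, and the limit is enforced regardless of what the model produces.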

Where companies can win: design for deployment and operations

Across all sectors, durable advantage will come from combining technology with operational design:

- Start with task-focused systems, not generalized robots

General-purpose autonomy is the aspiration; task-focused autonomy is the commercialization path. Early use cases should be chosen for repeatability, measurable value and scaling potential across sites.

- Engineer human-in-the-loop operations intentionally

“Early deployments are not about complete automation. The real design challenge is deciding when people step in — for supervision, exception handling, or approvals — and making those interventions explicit and controllable.”

— Kyota Seki, Director, Business Development, AI Products & Solutions, Preferred Networks

- Treat data as the limiting reagent

High-performing systems require AI-ready data that is reliable, accessible, contextual and governed. Simulation and synthetic data can help, but scaled operations also require continuous capture of real operational data (failures, near-misses, maintenance history) to power improvement loops.

- Build a simulation-first, deployment-safe pipeline

Digital twins and robotics simulation allow teams to test edge cases, validate safety behavior and accelerate iteration before physical deployment.

- Be the architect of edge reliability and resilient system behavior under failure

Robots cannot depend on perfect connectivity. Pushing time-critical perception and control to the edge while using the cloud for fleet optimization is often the pragmatic balance for latency, cost and reliability.
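The edge and cloud balance described in the last point can be expressed as a simple placement rule. The sketch below is one possible policy, assuming that anything a single device can decide runs locally and that fleet-level work waits when the network is unavailable or too slow; the task fields, names and thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    deadline_ms: float      # how quickly a result is needed
    needs_fleet_view: bool  # True if a single device cannot decide alone


def place_task(task: Task, cloud_round_trip_ms: float, cloud_reachable: bool) -> str:
    """Return 'edge', 'cloud' or 'defer' for the given task."""
    if not task.needs_fleet_view:
        # Perception and control stay local, so they keep working without connectivity.
        return "edge"
    if cloud_reachable and cloud_round_trip_ms <= task.deadline_ms:
        return "cloud"
    # Fleet-level work waits; the robot keeps operating on its last known plan.
    return "defer"


# Hypothetical tasks and network conditions.
avoid = Task("obstacle_avoidance", deadline_ms=20, needs_fleet_view=False)
routes = Task("fleet_route_optimization", deadline_ms=60_000, needs_fleet_view=True)

print(place_task(avoid, cloud_round_trip_ms=150, cloud_reachable=True))
print(place_task(routes, cloud_round_trip_ms=150, cloud_reachable=True))
print(place_task(routes, cloud_round_trip_ms=150, cloud_reachable=False))
```

The design choice worth noting is that a lost connection never blocks a time-critical task in this policy; it only postpones optimization that can tolerate delay.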

Conclusion

Physical AI is not simply more robots. It is a shift towards an operating infrastructure where AI can execute work in a reliable manner in the real world. Winners will treat deployment, safety and operations as first-class design constraints and build improvement loops that turn real-world variance into enduring advantage.

Generative AI has made thinking broadly accessible; physical AI has the potential to make doing more scalable, provided that organizations invest as much in operationalization as they do in innovation.

【Authors】

EY Strategy and Consulting Co., Ltd.

Technology, Media & Entertainment, and Telecommunications (TMT)

Director Yoshio Takechi

Intelligence Unit Consultant Kyna Tsai

Summary

Physical AI is moving from the pilot phase to operational infrastructure. We examine five obstacles to scaling and how to achieve success with deployment, operations, safety and data.